Unify the legacy ERP and the operational data into one model

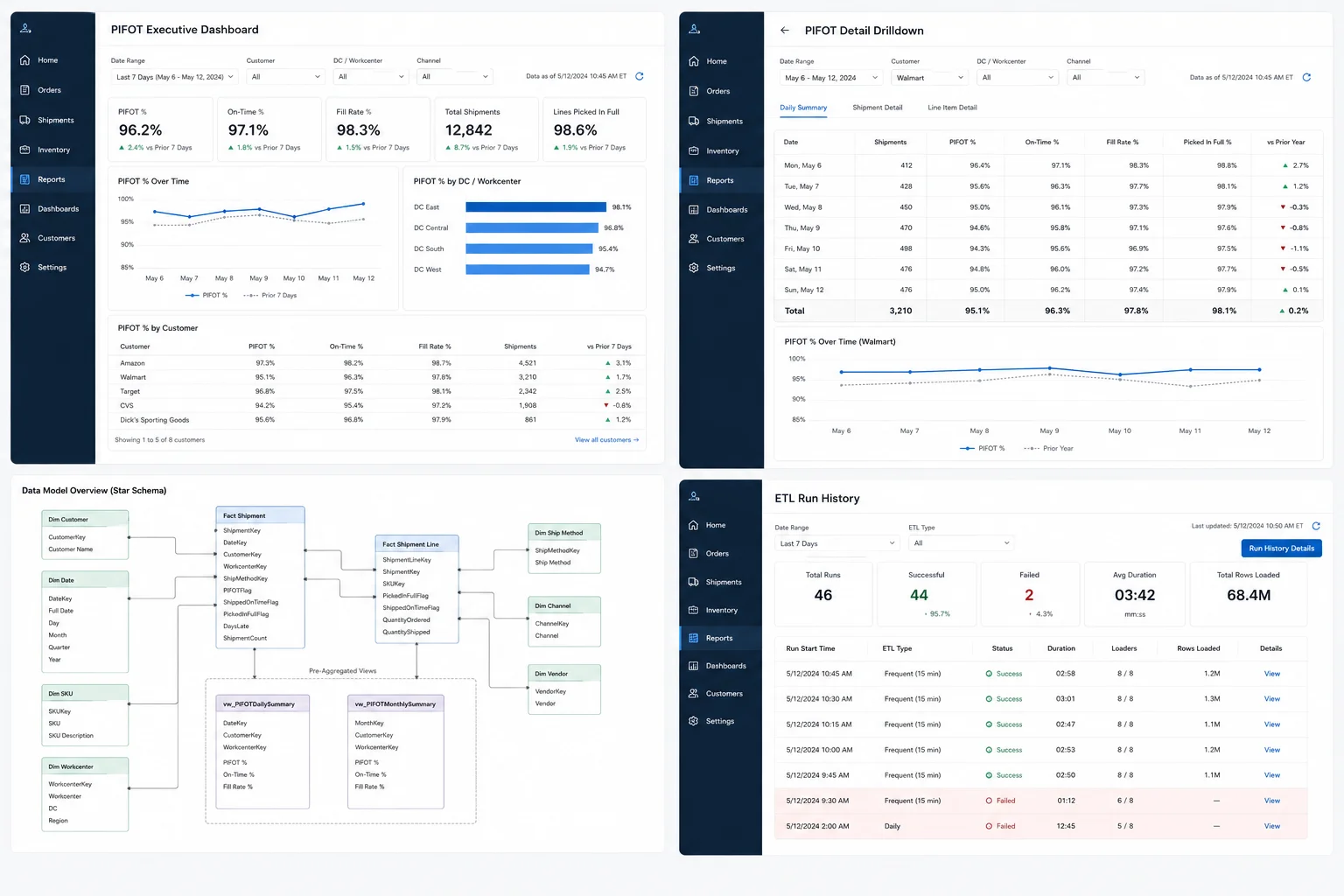

Orders, customers, and historical financial data live in the legacy ERP. Shipment status, ship windows, validation outcomes, and customer-specific rules live in the modern operational system. Neither system alone can answer "did we hit PIFOT this week?" An ETL service reads from both, lands the data in a clean star schema (eleven dimension tables, seven fact tables), and exposes pre-aggregated views for the queries that run most often.
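To make the shape of that pipeline concrete, here is a minimal sketch using Python's built-in `sqlite3` module. It is not the production ETL service: the table names (`dim_customer`, `fact_shipment`), the view name (`v_weekly_pifot`), the sample rows, and the simplified PIFOT rule (a shipment counts only if it is both on time and in full) are all assumptions made for illustration. SQLite has no materialized views, so an ordinary view stands in for a pre-aggregated one.

```python
import sqlite3

# Hypothetical rows standing in for the two source systems.
erp_orders = [  # legacy ERP: (order_id, customer, order_date)
    (1001, "ACME", "2024-03-04"),
    (1002, "ACME", "2024-03-05"),
    (1003, "Globex", "2024-03-06"),
]
ops_shipments = [  # operational system: (order_id, on_time, in_full)
    (1001, 1, 1),
    (1002, 1, 0),
    (1003, 1, 1),
]


def build_warehouse(conn):
    """Create one dimension, one fact table, and one aggregate view."""
    conn.executescript("""
        CREATE TABLE dim_customer (
            customer_key INTEGER PRIMARY KEY,
            name TEXT UNIQUE
        );
        CREATE TABLE fact_shipment (
            order_id INTEGER PRIMARY KEY,
            customer_key INTEGER REFERENCES dim_customer(customer_key),
            order_date TEXT,
            on_time INTEGER,
            in_full INTEGER
        );
        -- Assumed PIFOT rule: on time AND in full.
        CREATE VIEW v_weekly_pifot AS
        SELECT strftime('%Y-%W', order_date) AS week,
               COUNT(*) AS shipments,
               AVG(on_time AND in_full) AS pifot_rate
        FROM fact_shipment
        GROUP BY week;
    """)


def load(conn, orders, shipments):
    """Join ERP orders to operational shipment status and load the star schema."""
    status = {oid: (on_time, in_full) for oid, on_time, in_full in shipments}
    for oid, customer, order_date in orders:
        if oid not in status:
            continue  # no shipment record yet; skip in this sketch
        conn.execute(
            "INSERT OR IGNORE INTO dim_customer (name) VALUES (?)", (customer,)
        )
        (key,) = conn.execute(
            "SELECT customer_key FROM dim_customer WHERE name = ?", (customer,)
        ).fetchone()
        on_time, in_full = status[oid]
        conn.execute(
            "INSERT INTO fact_shipment VALUES (?, ?, ?, ?, ?)",
            (oid, key, order_date, on_time, in_full),
        )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_warehouse(conn)
    load(conn, erp_orders, ops_shipments)
    for row in conn.execute("SELECT * FROM v_weekly_pifot"):
        print(row)
```

The point of the sketch is the division of labor: the load step is the only place that knows about both sources, and the weekly question is answered by a single `SELECT` against the view rather than by joining the two systems at query time.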